Virtualization is a rapidly growing area of computing and IT, creating a "virtually" unlimited number of possibilities for system administrators. Virtualization has been around in one form or another for many years; the difficulty lies in understanding the different types of virtualization, what they offer, and how they can help us.

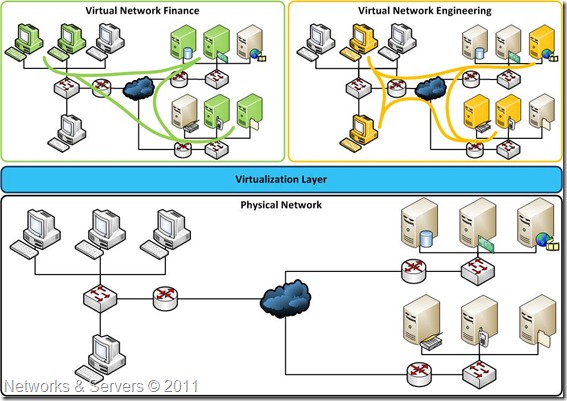

Virtualization has been defined as the abstraction of computer resources or as a technique for hiding the physical characteristics of computing resources from the way in which other systems, applications, or end users interact with those resources. In fact, virtualization always means abstraction. We make something transparent by adding layers that handle translations, causing previously important aspects of a system to become moot.

Storage Virtualization

The amount of data organizations create and store is increasing rapidly as business processes shift to Web-based digital applications, and this volume of data is causing problems for many of them. First, many applications generate more data than can be stored physically on a single server. Second, many applications, particularly Internet-based ones, have multiple machines that need to access the same data. Having all of the data sitting on one machine can create a bottleneck, and it creates a risk: if the single machine holding all the application’s data crashes, many other machines may become inoperable. Finally, the increase in the number of machines causes backup problems, because creating safe copies of data is a tremendous task when there are hundreds or even thousands of machines that need data backup.

For these reasons, data storage has moved toward virtualization. Companies use centralized storage (virtualized storage) as a way of avoiding data access problems. Furthermore, moving to centralized data storage can help IT organizations reduce costs and improve data management efficiency. The basic premise of storage virtualization solutions is not new. Disk storage has long relied on partitioning to organize physical disk tracks and sectors into clusters, and then to abstract those clusters into logical drive partitions (e.g., the C: drive). This allows the operating system to read and write data to the local disks without regard to the physical location of individual bytes on the disk platters.

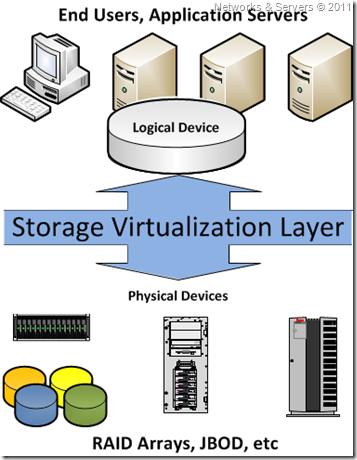

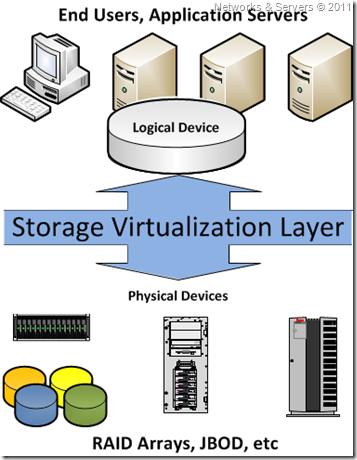

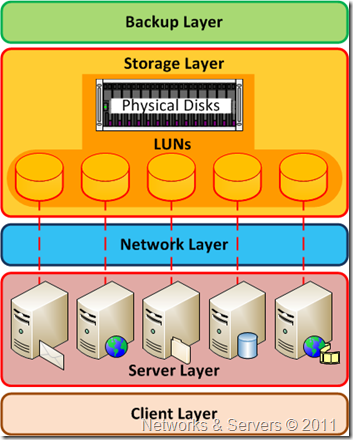

Storage virtualization creates a layer of abstraction between the operating system and the physical disks used for data storage. The virtualized storage is then location-independent, which can enable more efficient use and better storage management. For example, the storage virtualization software or device creates a logical space, and then manages metadata that establishes a map between the logical space and the physical disk space. The creation of logical space allows a virtualization platform to present storage volumes that can be created and changed with little regard for the underlying disks.
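The mapping between logical space and physical disk space can be illustrated with a minimal sketch. The class and disk names below (`LogicalVolume`, `disk-a`, `disk-b`) are hypothetical and chosen only for illustration; real virtualization platforms keep this metadata in far more elaborate structures.

```python
from typing import Dict, Tuple

# Hypothetical type alias: (disk name, physical block number).
PhysicalLocation = Tuple[str, int]


class LogicalVolume:
    """A logical space backed by a metadata map to physical disk blocks."""

    def __init__(self) -> None:
        # Metadata: logical block number -> physical location.
        self._block_map: Dict[int, PhysicalLocation] = {}

    def map_block(self, logical_block: int, disk: str, physical_block: int) -> None:
        """Record where a logical block currently lives."""
        self._block_map[logical_block] = (disk, physical_block)

    def resolve(self, logical_block: int) -> PhysicalLocation:
        """Translate a logical block number into its physical location."""
        return self._block_map[logical_block]


volume = LogicalVolume()
# Consecutive logical blocks may sit on different physical disks.
volume.map_block(0, "disk-a", 512)
volume.map_block(1, "disk-b", 7)
volume.map_block(2, "disk-a", 513)

print(volume.resolve(1))
```

The consumer of the volume only ever uses logical block numbers; which disk holds a given block is a detail that lives entirely in the map.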

The storage virtualization layer is where the resources of many different storage devices are pooled so that they look like one big container of storage. The pool is then managed by a central system, which makes it all look much simpler to network administrators. It is also a good way to monitor resources: you can see exactly how much capacity remains at any given time, which reduces the hassle of tasks such as backups.
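As a rough sketch of pooling, the example below combines several devices into one container and reports the remaining capacity centrally. The `StoragePool` class, its methods, and the device names are assumptions made for illustration, not the interface of any particular product.

```python
from typing import Dict, List, Tuple


class StoragePool:
    """Hypothetical pool that presents several devices as one container."""

    def __init__(self) -> None:
        self._capacity_gb: Dict[str, int] = {}
        self._used_gb: Dict[str, int] = {}

    def add_device(self, name: str, capacity_gb: int) -> None:
        """Add a storage device and its capacity to the pool."""
        self._capacity_gb[name] = capacity_gb
        self._used_gb[name] = 0

    def free_gb(self) -> int:
        """Central view of how much space is left across all devices."""
        return sum(self._capacity_gb.values()) - sum(self._used_gb.values())

    def allocate(self, size_gb: int) -> List[Tuple[str, int]]:
        """Allocate space from the pool, spilling across devices if needed."""
        if size_gb > self.free_gb():
            raise ValueError("not enough free space in the pool")
        placement: List[Tuple[str, int]] = []
        remaining = size_gb
        for name, capacity in self._capacity_gb.items():
            if remaining == 0:
                break
            take = min(remaining, capacity - self._used_gb[name])
            if take > 0:
                self._used_gb[name] += take
                placement.append((name, take))
                remaining -= take
        return placement


pool = StoragePool()
pool.add_device("array-1", 100)
pool.add_device("array-2", 50)

# A 120 GB request does not fit on either device alone, but fits in the pool.
placement = pool.allocate(120)
print(placement, pool.free_gb())
```

The caller asks the pool for space and never has to decide which device supplies it; the single `free_gb()` figure is the kind of central monitoring the text describes.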

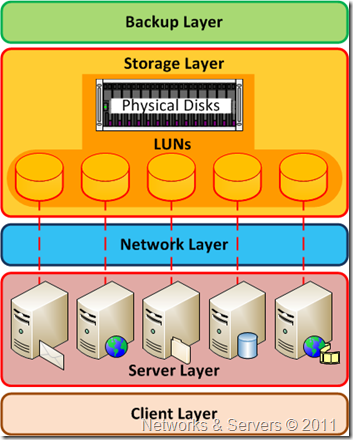

In most data centers, only a small percentage of storage is used because, even with a SAN, one has to allocate a full disk logical unit number (LUN) to the server (or servers) attaching to that LUN. Imagine that a LUN fills up but there is disk space available on another LUN. It is very difficult to take disk space away from one LUN and give it to another LUN. Plus, it is very difficult to mix and match storage and make it appear all as one.

Storage virtualization works well for mirroring traffic across a WAN and for migrating LUNs from one disk array to another without downtime. With some types of storage virtualization, for instance, you no longer need to keep track of where you have allocated data, because migrating it somewhere else is much simpler. In fact, many systems will migrate data based on utilization to optimize performance.
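One way to picture migration without downtime is that the data is copied to the new array and the logical-to-physical map is then switched, so readers keep using the same logical address throughout. The sketch below is a simplified illustration under that assumption; the array names, the `lun_map` structure, and the `migrate` function are all hypothetical, and a real system would also have to handle writes that arrive during the copy.

```python
from typing import Dict, Tuple

# Hypothetical in-memory "arrays": array name -> {physical block: data}.
arrays: Dict[str, Dict[int, bytes]] = {
    "old-array": {0: b"alpha", 1: b"beta"},
    "new-array": {},
}

# Metadata map for one LUN: logical block -> (array name, physical block).
lun_map: Dict[int, Tuple[str, int]] = {
    0: ("old-array", 0),
    1: ("old-array", 1),
}


def read(logical_block: int) -> bytes:
    """Read through the map; callers never name an array directly."""
    array, physical_block = lun_map[logical_block]
    return arrays[array][physical_block]


def migrate(logical_block: int, target_array: str, target_block: int) -> None:
    """Copy a block to another array, then repoint the map at the copy."""
    source_array, source_block = lun_map[logical_block]
    # 1. Copy the data while the old location still serves reads.
    arrays[target_array][target_block] = arrays[source_array][source_block]
    # 2. Switch the map so later reads go to the new location.
    lun_map[logical_block] = (target_array, target_block)
    # 3. Release the old physical block.
    del arrays[source_array][source_block]


before = read(0)
migrate(0, "new-array", 0)
after = read(0)
print(before == after, lun_map[0])
```

Because only the map changes, the same mechanism could be driven by a utilization policy that moves heavily used blocks to faster storage, without the servers attached to the LUN noticing.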